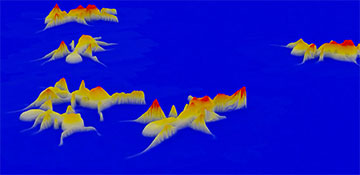

A new kind of microscope that stitches together videos from dozens of smaller cameras can provide researchers with 3D views of their experimental subjects—in this case, moving ants. [Image: R. Horstmeyer, Duke University]

To study small, moving creatures such as ants or fish larvae, microscope users typically have to pick one or two items from a list of three desirables: high speed, high spatial resolution and a wide, 3D field of view. Now a new type of imaging microscope can give biologists all three properties, according to its developers.

An interdisciplinary team in the United States created the 3D computational microscope, which captures more than 5 gigapixels of data per second (Nat. Photon., doi: 10.1038/s41566-023-01171-7). The heart of the instrument is an array of 54 synchronized cameras, which generate images that are fused together to form photometric composites with 3D height information.

Iterating the idea

Work on the multi-camera array microscope (MCAM) began about six years ago, when graduate students Roarke Horstmeyer and Mark Harfouche built the first version of their instrument out of smartphone cameras at the California Institute of Technology, USA. Both had a keen interest in computational optics techniques.

Horstmeyer, now a biomedical engineering professor at Duke University, USA, and Harfouche, now with a startup called Ramona Optics, went on to develop several versions of the microscope, including one with 96 cameras arrayed to track the movements of zebrafish larvae.

According to Horstmeyer, the newest MCAM from his team is the first in the series to record at standard video rates or better. It contains 54 miniaturized cameras arranged in a 9 × 6 array with 1.35-cm spacing. Together, the cameras can capture up to roughly 700 megapixels per frame. Software called 3D-RAPID fuses the simultaneously captured images and calculates the third dimension: the “camera-centric” heights of objects in the images. The algorithm allows users to adjust the number of cameras and the amount of downsampling, raising or lowering the frame rate while staying within the computer’s maximum data throughput of roughly 5 gigapixels per second.
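The trade-off between camera count, downsampling and frame rate comes down to simple arithmetic, which the short Python sketch below illustrates. It is not the team's software: the function name, the per-camera pixel count of 13 megapixels and the assumption that downsampling is applied equally along both image axes are all hypothetical choices made for illustration. Only the 54 cameras and the roughly 5 gigapixels per second come from the article.

```python
def max_frame_rate(throughput_px_per_s, n_cameras, px_per_camera, downsample=1):
    """Highest frame rate (frames/s) that fits a fixed pixel throughput.

    `downsample` is a hypothetical per-axis factor, so each camera
    contributes px_per_camera / downsample**2 pixels per frame.
    """
    if n_cameras < 1 or downsample < 1:
        raise ValueError("n_cameras and downsample must be at least 1")
    px_per_frame = n_cameras * px_per_camera / downsample**2
    return throughput_px_per_s / px_per_frame


THROUGHPUT = 5e9        # ~5 gigapixels per second (from the article)
N_CAMERAS = 54          # 9 x 6 array (from the article)
PX_PER_CAMERA = 13e6    # illustrative assumption, not stated in the article

if __name__ == "__main__":
    # Fewer pixels per frame (more downsampling or fewer cameras)
    # leaves room for more frames per second.
    for cams, ds in [(54, 1), (54, 2), (54, 4), (24, 2)]:
        fps = max_frame_rate(THROUGHPUT, cams, PX_PER_CAMERA, ds)
        print(f"{cams} cameras, {ds}x downsampling: {fps:.1f} frames/s")
```

Under these assumptions, halving the resolution along each axis quadruples the achievable frame rate, and dropping cameras raises it in proportion, which is why a system with a fixed data budget can reach video rates by giving up some resolution or field of view.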

“In my opinion, the biggest challenge was handling the large data load, given the high throughput of our system,” says Kevin C. Zhou, who worked as a postdoc in Horstmeyer’s computational optics lab at Duke before moving to the University of California, Berkeley, USA. To perform 3D reconstructions of multiple videos efficiently, the team took a self-supervised machine-learning approach that scales to long videos of up to thousands of frames taken with many cameras (54 in this work), according to Zhou.

3D creature movements

As a demonstration of the MCAM’s capability, the team captured 3D videos of harvester ants and adult fruit flies on a flat surface and of zebrafish larvae swimming in a small dish sprinkled with food particles.

Videos of the “side view” of the moving ants show artifacts connecting the insects’ legs with the surface below, whereas in reality there is an open-air gap between most of the legs and the supporting surface. Zhou attributes this to the top-down position of the cameras: without side-view information, he says, it is hard to know whether a gap exists. He considers the MCAM’s choice to fill in the gaps artificially to be arbitrary and says the team could remove the artifacts.

Besides boosting the MCAM’s capabilities—resolution, speed, throughput and contrast—the team hopes to expand the microscope’s applications to fields such as drug discovery, industrial inspection and the preservation of cultural artifacts.