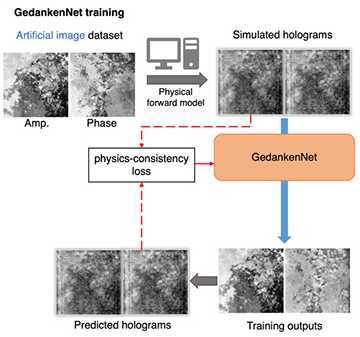

By applying a physics-based model to simulated holograms that are generated from random images, GedankenNet is able to accurately reconstruct images of tissue samples and Pap smears. [Image: Ozcan Research Lab/UCLA]

Researchers at the University of California, Los Angeles, USA, have demonstrated an alternative model for artificial intelligence (AI) that can reconstruct holographic images without the need for any experimental training data (Nat. Mach. Intell., doi: 10.1038/s42256-023-00704-7). The self-supervised learning scheme, dubbed “GedankenNet” after the Gedankenexperiment, the German term for the kind of thought experiment that Einstein famously used, reportedly generates images of unseen biological samples with a higher fidelity than is possible with traditional deep-learning techniques.

Artificial holograms as input data

Existing AI methodologies for image reconstruction typically rely on vast and varied training datasets, which require many different samples to be imaged and annotated. However, these supervised learning approaches can struggle to process experimental specimens that are not adequately represented in the training dataset or that have been imaged using different experimental setups.

In contrast, the input data for GedankenNet are artificial holograms that are generated at random, without the need for any experimental data or knowledge of real-world samples. The self-supervised learning scheme exploits a physical model of light propagation to calculate predicted holograms, and then it directly compares these predictions with the input holograms to refine the parameters of the neural network.
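The comparison described above can be illustrated with a minimal NumPy sketch. It is not the team's implementation: the angular spectrum method is used here as one standard free-space propagation model, and the mean-squared-error loss, the function names, the wavelength, the pixel size and the propagation distances are all assumptions chosen for illustration. A candidate complex field (standing in for the network output) is propagated to each hologram plane, and the resulting predicted intensities are compared with the input holograms.

```python
import numpy as np

def angular_spectrum_propagate(field, distance, wavelength, pixel_size):
    """Propagate a complex 2D field through free space by `distance` (metres)."""
    ny, nx = field.shape
    fx = np.fft.fftfreq(nx, d=pixel_size)  # spatial frequencies along x
    fy = np.fft.fftfreq(ny, d=pixel_size)  # spatial frequencies along y
    FX, FY = np.meshgrid(fx, fy)
    # Squared longitudinal frequency; non-positive values are evanescent waves.
    arg = 1.0 / wavelength**2 - FX**2 - FY**2
    propagating = arg > 0
    kz = 2 * np.pi * np.sqrt(np.where(propagating, arg, 0.0))
    # Free-space transfer function; evanescent components are discarded.
    transfer = np.where(propagating, np.exp(1j * kz * distance), 0.0)
    return np.fft.ifft2(np.fft.fft2(field) * transfer)

def physics_consistency_loss(predicted_field, input_holograms, distances,
                             wavelength, pixel_size):
    """Mean-squared error between predicted and input hologram intensities.

    The choice of MSE is an assumption made for this sketch.
    """
    loss = 0.0
    for hologram, z in zip(input_holograms, distances):
        propagated = angular_spectrum_propagate(
            predicted_field, z, wavelength, pixel_size)
        predicted_hologram = np.abs(propagated) ** 2
        loss += np.mean((predicted_hologram - hologram) ** 2)
    return loss / len(distances)

# Hypothetical setup: a random artificial object and two hologram planes.
rng = np.random.default_rng(0)
wavelength, pixel_size = 0.53e-6, 0.5e-6   # assumed values, in metres
distances = [300e-6, 400e-6]               # assumed sample-to-sensor distances

amplitude = 1.0 + 0.1 * rng.random((64, 64))
phase = 0.5 * rng.random((64, 64))
true_field = amplitude * np.exp(1j * phase)

# Artificial input holograms generated from the random object.
holograms = [
    np.abs(angular_spectrum_propagate(true_field, z, wavelength, pixel_size)) ** 2
    for z in distances
]

# The loss vanishes for the correct field and is positive for a wrong guess.
loss_true = physics_consistency_loss(
    true_field, holograms, distances, wavelength, pixel_size)
loss_wrong = physics_consistency_loss(
    np.ones((64, 64), dtype=complex), holograms, distances, wavelength, pixel_size)
print(f"loss (true field):  {loss_true:.3e}")
print(f"loss (wrong guess): {loss_wrong:.3e}")
```

In a training loop, a loss of this kind would be minimized with respect to the network parameters, so no ground-truth image of a real sample is needed at any point.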

Physics-based approach

Once the model had been trained, the team tested its performance using 3D holographic images of human tissue samples and Pap smears. GedankenNet was found to reconstruct the images with high fidelity, offering greater accuracy than could be achieved with conventional deep-learning models that had been trained with experimentally acquired holograms for different tissue types.

The team also tested the performance of the two types of model when each was trained with both a general dataset of natural images and typical experimental results from samples of lung tissue. In this case, the supervised model produced better image reconstructions of the lung tissue when it was trained using the matching experimental dataset. But GedankenNet’s physics-based approach consistently outperformed the traditional supervised model when more general training data were used.

Unlike other AI techniques that have been used for image reconstruction, the physics-based model was also shown to produce results that are consistent with Maxwell’s equations. When tasked with reconstructing experimental holograms that had been defocused along the axial direction, the model delivered a physically consistent output of defocused holograms rather than the non-physical hallucinations that other models tend to produce.

“These findings illustrate the potential of self-supervised AI to learn from thought experiments, just like scientists do,” said team leader Aydogan Ozcan. “It opens up new opportunities for developing physics-compatible, easy-to-train and broadly generalizable neural-network models as an alternative to standard, supervised deep-learning methods currently employed in various computational imaging tasks.”