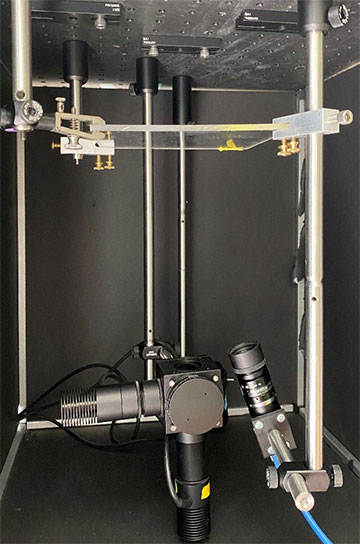

Researchers have developed a low-cost, simple imaging system that uses LEDs and a CMOS camera to determine the depth of tumor cells in the body. The portable system could eventually be used during surgery to distinguish between healthy and cancerous tissue when removing a tumor. [Image: C.M. O’Brien, Washington University in St. Louis]

In cancer surgery, oncologists are always looking for better imaging tools that help them distinguish tumors from healthy tissue while the patient is still on the operating table. Ideally, such systems would tell surgeons how deep the cancerous tissue extends as well as its length and width, but the few approaches that can do this are expensive and bulky.

Now researchers at a US university have developed a streamlined dual-wavelength excitation fluorescence imaging system that delivers the necessary depth information (Biomed. Opt. Express, doi: 10.1364/BOE.468059). The new method achieved high accuracy in tests both on chicken tissue and in vivo in mice, but it still awaits full clinical trials.

Improving past approaches

The researchers at Washington University in St. Louis (WUSTL), USA, built on earlier work on near-infrared (NIR) fluorophores and fluorescence-guided surgery carried out in the laboratory of Optica Fellow Samuel Achilefu. Achilefu worked on cancer-imaging systems at WUSTL for nearly three decades until he moved to the University of Texas Southwestern Medical Center, USA, in early 2022.

The WUSTL experiments also build on the concept of ratiometric fluorescence imaging, which, the researchers write, “takes advantage of the wavelength-dependent attenuation of light in tissue to provide depth information independent of fluorophore concentration.” These were the first such experiments to incorporate an NIR fluorophore into the imaging system. This fluorophore, designated LS301 and developed in Achilefu’s WUSTL lab, is excited by light at wavelengths between 650 and 810 nm and binds to a type of protein that is overexpressed in many solid tumors. Because LS301 can be excited across such a broad band, a single fluorophore serves for both excitation wavelengths, eliminating the need for multiple fluorophores.

Dual-wavelength system

After injecting the fluorophore into the target tissue, the researchers, led by WUSTL assistant professor Christine O’Brien, used 730- and 780-nm LEDs to excite the fluorophore and an 850-nm LED to provide a bright-field image for context. The team used diffuse reflectance spectroscopy to measure the optical properties of the tissue samples.
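To illustrate why the ratio of the two fluorescence images carries depth information but not concentration, here is a minimal sketch under a deliberately simplified, assumed model: light is attenuated exponentially with depth, using a single hypothetical effective attenuation coefficient per wavelength. The coefficients, excitation efficiencies and function names below are illustrative inventions, not values or code from the study, and the published method may rely on a more rigorous light-transport model. Under this model, the fluorophore concentration and the attenuation of the emitted light appear identically in both signals, so they cancel in the ratio, leaving a quantity that depends only on depth.

```python
import math

# Hypothetical effective attenuation coefficients (1/mm) at the two excitation
# wavelengths. Real values would come from measured tissue optical properties.
MU_EFF_730 = 1.2
MU_EFF_780 = 1.0
MU_EFF_EMISSION = 0.9  # hypothetical attenuation of the emitted fluorescence


def simulate_signals(depth_mm, concentration, eta_730, eta_780):
    """Simplified 1D model of the fluorescence detected under each excitation.

    Excitation light is attenuated on the way down to the fluorophore and the
    emitted light on the way back up. The emission leg is identical for both
    excitation wavelengths, so it cancels when the two signals are divided.
    """
    emission_loss = math.exp(-MU_EFF_EMISSION * depth_mm)
    f_730 = concentration * eta_730 * math.exp(-MU_EFF_730 * depth_mm) * emission_loss
    f_780 = concentration * eta_780 * math.exp(-MU_EFF_780 * depth_mm) * emission_loss
    return f_730, f_780


def depth_from_ratio(f_730, f_780, surface_ratio):
    """Invert R = R0 * exp(-(mu_730 - mu_780) * d) for the depth d in mm.

    surface_ratio (R0) is the F730/F780 ratio for a fluorophore at the surface,
    i.e. the ratio of excitation efficiencies, which a calibration would supply.
    """
    if f_730 <= 0 or f_780 <= 0:
        raise ValueError("fluorescence signals must be positive")
    delta_mu = MU_EFF_730 - MU_EFF_780
    if delta_mu == 0:
        raise ValueError("attenuation must differ between the two wavelengths")
    return math.log(surface_ratio / (f_730 / f_780)) / delta_mu


if __name__ == "__main__":
    eta_730, eta_780 = 0.6, 1.0  # hypothetical relative excitation efficiencies
    for conc in (0.5, 5.0):  # tenfold change in fluorophore concentration
        f1, f2 = simulate_signals(3.0, conc, eta_730, eta_780)
        print(conc, round(depth_from_ratio(f1, f2, eta_730 / eta_780), 6))
```

In this toy example, a tenfold change in concentration changes both raw signals but not the recovered depth of about 3 mm. The inversion also only works if tissue attenuates the two excitation wavelengths differently, which is why accurate optical-property measurements matter for this kind of depth retrieval.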

Signal-to-noise ratio of in vivo standard 780-nm excited fluorescence (left) and NIR dual-wavelength excitation fluorescence (right) taken from tumor-bearing mice. [Image: C.M. O’Brien, Washington University in St. Louis]

The first target “tissue” was actually a polyurethane-based mixture of synthetic materials, designed and molded to have roughly the same optical properties as mammalian tissue. Next, the team tested the method on chicken breast meat bought at a supermarket, applying the fluorophore and LED illumination. Finally, the researchers performed the experiment on anesthetized mice with subcutaneous breast cancer. In the mice, the method estimated tumor depth with an average error of only 0.36 mm.

The biggest challenges of the work, according to O’Brien, were making accurate measurements of optical properties and working with non-flat tissue samples (the chicken and the mouse).

To be useful to surgeons, O’Brien says, the system needs further automation so that it can scan the entire tissue surface. “In addition, we intend to implement a 2D strategy for optical property retrieval that will further facilitate measurement automation and rapid time to results for surgical guidance,” she says. “We have already begun testing this system with breast cancer patients undergoing lumpectomy, but additional automation as described above would be needed before a full clinical trial.”