Two research teams have developed methods for creating giant entangled cluster states of light that could offer a route to an alternative to the circuit-based model for universal quantum computing. [Image: Shota Yokoyama, 2019]

While universal quantum computers are likely still a long way off, the drive to develop them has scored some impressive wins in recent years, on platforms ranging from superconducting circuits to laser-trapped lattices of ions or atoms. Much of the work has focused on “quantum circuit” or “logic gate” architectures, analogous to those found in classical machines. But scaling such systems up to more than a handful of quantum bits (qubits), while keeping error rates low, constitutes a major experimental and practical challenge.

Last week, two separate research groups published papers that point to a very different model of quantum computing, one in which scalability is, in a sense, baked in at the start (Science, doi: 10.1126/science.aay2645, 10.1126/science.aay4354). The two groups both used a combination of quantum “squeezed light” and straightforward optical components to create massive, quantum-entangled states of light known as 2-D cluster states.

These extensive “entanglement resources” could form the foundation for an alternative to the quantum circuit model—so-called measurement-based or one-way quantum computing. And that alternative, its proponents argue, could avoid some of the circuit model’s complexities, offering a more direct, scalable pathway to universal, fault-tolerant quantum computers.

Scaling challenges

In the circuit- or logic-gate-based approach to quantum computing, arrays of qubits proceed through a series of unitary operations in quantum gates, with a final measurement at the end providing the output of the program. It’s a familiar model—but one that requires sequentially preparing, interfacing and maintaining large numbers of individual, high-quality qubits as the program proceeds, and keeping their delicate quantum information intact long enough for the machine to do something useful.

That’s made scaling these systems up to large numbers of qubits a challenging proposition. Researchers have also worked with other models of quantum computing, including adiabatic and topological computing, which have advantages for certain types of problems.

One-way ticket

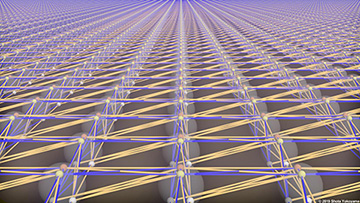

Artist rendering of a 2-D cluster state. [Image: Jonas S. Neergaard-Nielsen / Technical University of Denmark]

Still another model, measurement-based or one-way quantum computing, approaches scalability in a very different way. First proposed nearly two decades ago by Robert Raussendorf and Hans Briegel, one-way computing begins with the preparation of a giant, highly entangled “quantum resource” state, called a cluster state, in which all qubits in the calculation have been interconnected in advance.

The computation then proceeds in a series of local, single-site measurements, in a specific order and quantum basis; the outcome of each measurement is logged classically and becomes an input for the next quantum measurement basis on the cluster state. In this approach, the quantum algorithm is encoded in the pattern of individual measurements. The program’s final result is given by the classical data from the individual quantum measurements, and the initial cluster state is destroyed in the course of the computation. “Cluster states are thus one-way quantum computers,” as Raussendorf and Briegel put it in their initial paper, “and the measurements form the program.”
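The measure-then-adapt loop described above can be illustrated with the smallest possible case: one input qubit entangled with one fresh cluster qubit. The sketch below is a toy simulation in plain Python, not code from either study. The function names, the measurement angle `theta` and the amplitude convention are all assumptions made for illustration. It shows that measuring the first qubit in a rotated basis leaves the second qubit in a rotated copy of the input, up to a bit-flip that depends on the random measurement outcome and that the classical record tells us how to undo.

```python
import cmath
import math
import random


def measure_first_qubit(psi, theta, rng=random):
    """Entangle input qubit psi=(a, b) with a |+> qubit, then measure qubit 1.

    Qubit 1 is measured in the basis (|0> +/- e^{i*theta}|1>)/sqrt(2).
    Returns (m, out): the classical outcome m (0 or 1) and the normalized
    state of qubit 2 after the measurement.
    """
    a, b = psi
    s = 1 / math.sqrt(2)
    # After a controlled-Z gate the joint state is a|0>|+> + b|1>|->,
    # stored as amplitudes for |00>, |01>, |10>, |11>.
    state = [a * s, a * s, b * s, -b * s]
    bra_phase = cmath.exp(-1j * theta)  # complex conjugate used in the projection
    branches = []
    for sign in (+1, -1):
        out0 = s * (state[0] + sign * bra_phase * state[2])
        out1 = s * (state[1] + sign * bra_phase * state[3])
        prob = abs(out0) ** 2 + abs(out1) ** 2
        branches.append((prob, (out0, out1)))
    # Pick an outcome at random according to the Born rule.
    m = 0 if rng.random() < branches[0][0] else 1
    prob, (out0, out1) = branches[m]
    norm = math.sqrt(prob)
    return m, (out0 / norm, out1 / norm)


def apply_byproduct_correction(m, out):
    """Undo the outcome-dependent bit-flip X^m using the logged result m."""
    return (out[1], out[0]) if m == 1 else out


def target_state(psi, theta):
    """The intended result: Hadamard applied after a phase rotation by -theta."""
    a, b = psi
    b_rot = cmath.exp(-1j * theta) * b
    s = 1 / math.sqrt(2)
    return (s * (a + b_rot), s * (a - b_rot))


psi = (0.6, 0.8j)   # hypothetical input state
theta = 0.3         # hypothetical measurement angle
m, out = measure_first_qubit(psi, theta)
fixed = apply_byproduct_correction(m, out)
```

In a longer chain of cluster qubits, the logged outcome would typically be used to adjust the next measurement angle rather than to correct the state directly, which is the feed-forward step the text describes.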

Cluster state challenges

In principle, many researchers believe that one-way quantum computing offers a potentially more scalable approach than the quantum circuit model. That’s because the one-way approach relies on simple single-site measurements. It thus reduces the scaling problem to finding a way to create a sufficiently large cluster state at the front end to act as an adequate resource for the computation, rather than managing multiple quantum interactions between growing numbers of individual qubits.

But creating such large cluster states has had challenges of its own. One is that, for universal quantum computation, the “topological structure” of the cluster-state entanglement must be at least two-dimensional. And most demonstrations of cluster states on existing platforms, such as ion traps and solid-state superconducting systems, have been only one-dimensional.

Light-based 2-D cluster states

The two research groups involved in the newly published studies—one based in Denmark, and the other involving scientists in Japan, Australia and the United States—have now demonstrated a different approach to creating cluster states, using highly entangled lattices of light.

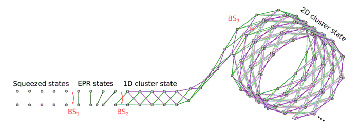

The new studies begin with squeezed states of light, and use a variety of optical elements to weave those states into a 2-D cluster state of tens of thousands of entangled nodes. This schematic shows the approach of the Danish group. [Image: Technical University of Denmark]

While some of the details of the two studies differ, both begin with the creation of squeezed states of light. In a squeezed state, the measurement error in one quantum parameter or “quadrature” describing a light field (for example, amplitude) is slimmed down by allowing the error in the other, less relevant quadrature (for example, phase) to expand. This quantum-mechanical trick is beginning to find its way into designs for applications such as sensors that can reduce errors to below the shot-noise limit.
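The trade-off between the two quadratures can be made concrete with a few lines of arithmetic. The sketch below is illustrative only: it assumes the common textbook convention in which the vacuum (shot-noise) variance of each quadrature is 1/2, and it uses a squeezing parameter `r` that does not appear in either study as reported here. In that convention, an ideal squeezed state has one variance shrunk by a factor of e^(-2r) and the other stretched by e^(2r), so their product stays at the minimum allowed by the uncertainty principle.

```python
import math

VACUUM_VARIANCE = 0.5  # shot-noise level per quadrature (assumed convention)


def quadrature_variances(r):
    """Return (squeezed, antisqueezed) variances of an ideal squeezed state."""
    squeezed = VACUUM_VARIANCE * math.exp(-2 * r)
    antisqueezed = VACUUM_VARIANCE * math.exp(2 * r)
    return squeezed, antisqueezed


def squeezing_db(r):
    """Noise reduction below shot noise, in decibels, for squeezing parameter r."""
    squeezed, _ = quadrature_variances(r)
    return -10 * math.log10(squeezed / VACUUM_VARIANCE)


for r in (0.0, 0.5, 1.0):  # hypothetical squeezing parameters
    squeezed, antisqueezed = quadrature_variances(r)
    print(r, squeezed, antisqueezed, squeezed * antisqueezed, squeezing_db(r))
```

Real sources fall short of this ideal because optical loss mixes vacuum noise back into the squeezed quadrature, so measured squeezing is lower than the formula suggests for a given `r`.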

In both of the new studies, once the initial squeezed states are created, they are passed through networks of beam splitters, delay lines and other optical components to be “woven into” a cylindrical, 2-D blanket of highly entangled light states. The result is a purely optical 2-D cluster state, consisting of thousands of entangled optical modes: 24,960 modes in the work from the Japan–Australia–U.S. group, and more than 30,000 in the Danish work.
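One way to picture how delay lines can turn a one-dimensional stream of light pulses into a cylinder is a toy graph model, sketched below. It is not the actual optical circuit of either group. It simply assumes that each time bin `k` becomes linked to its immediate successor `k + 1` (a short delay) and to the bin one full turn later, `k + circumference` (a long delay). The function names and the chosen sizes are hypothetical.

```python
def cylinder_edges(num_modes, circumference):
    """List the links between time bins created by a short and a long delay."""
    edges = []
    for k in range(num_modes):
        if k + 1 < num_modes:
            edges.append((k, k + 1))              # short delay: next time bin
        if k + circumference < num_modes:
            edges.append((k, k + circumference))  # long delay: one turn later
    return edges


def cylinder_coords(k, circumference):
    """Map time bin k to (position around the cylinder, position along its axis)."""
    return (k % circumference, k // circumference)


def neighbours(k, edges):
    """All time bins directly linked to bin k."""
    return sorted({b for a, b in edges if a == k} | {a for a, b in edges if b == k})


edges = cylinder_edges(num_modes=48, circumference=12)  # hypothetical sizes
print(cylinder_coords(13, 12), neighbours(13, edges))
```

In this picture the stream of pulses winds around the cylinder like thread on a spool, and the lattice grows along the axis for as long as the light source keeps running, which is why the number of modes can become so large without adding hardware.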

Further, the teams believe that their setups are sufficiently simple to be scalable to much larger numbers. And the Danish group adds that its system operates at room temperature in optical fibers at telecom wavelengths of 1550 nm. That, the team believes, offers a distinct advantage over superconducting platforms that must operate at chilly cryogenic temperatures.

Next step: Error control

Some optical devices used in the Japan–Australia–U.S. setup. [Image: CQC2T]

With the creation of these vast entangled light states, both research groups believe they’ve laid the groundwork for universal one-way quantum computing, as a more naturally scalable alternative to the predominant circuit-based model. But a hurdle still to be cleared—as with other efforts toward universal quantum computing—is fault tolerance. While the use of squeezed light helps to hem in some potential errors, both groups admit that the large cluster states are still too noisy to support universal quantum computing out of the box.

The two teams see different ways to get around this problem. The Japan–Australia–U.S. team believes that improving squeezing levels using state-of-the-art squeezed-light sources could bring the assemblage within the error threshold for fault-tolerant computing. And the Danish group sees promise in adapting an error-correcting encoding that has been successfully demonstrated in the microwave regime (so-called GKP state encoding) to optical frequencies.

In either case, the authors of the two studies are optimistic that their demonstrations open up a new pathway for quantum computing with light. In a press release accompanying his team’s work, Ulrik Andersen, the leader of the Danish effort, called the group’s cluster state “a potential candidate for the next generation of larger and more powerful quantum computers.”

The Japan–Australia–U.S. study included scientists from the University of Tokyo, Japan; the University of New South Wales and RMIT University, Australia; and the University of New Mexico, USA. The Danish study took place at the Technical University of Denmark.