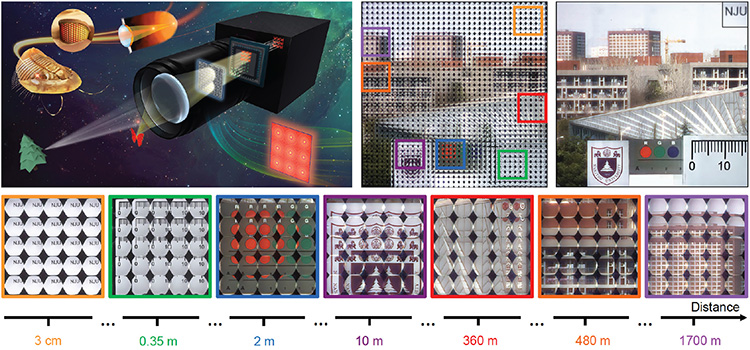

Top left: Conceptual schematic of trilobite-inspired light-field camera with bifocal metalens array. Top middle: Captured light-field image of the whole scene under natural light after aberration correction. Top right: Aberration-corrected all-in-focus image after rendering. Bottom: Zoomed-in sub-images of objects at different distances, corresponding to the regions marked in the captured light-field image.


Light-field imaging systems measure the full 4D representation of light (the spatial and angular distribution of rays), and can encode color, depth, specularity, transparency, refraction and occlusion of the scene.¹ Depth of field (DoF) and spatial resolution are two key system parameters in light-field photography, and achieving a large DoF without compromising spatial resolution remains an outstanding challenge. In work published this year, inspired by the bifocal compound eye of the trilobite Dalmanitina socialis,² we demonstrated a single-shot nanophotonic camera incorporating a spin-multiplexed metalens array that achieves high-resolution light-field imaging with a record DoF.³
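To make the "4D" concrete: a light field can be stored as an array L[u, v, s, t], where (u, v) indexes the viewpoint (sub-aperture) and (s, t) indexes the pixel within that view. The sketch below is a generic shift-and-add refocusing routine, not the method of ref. 3. It shows how a single capture can be refocused after the fact. The function names and the integer-disparity model are illustrative assumptions. In a conventional plenoptic design, the (u, v) and (s, t) samples share the same sensor pixels, which is one reason angular coverage and spatial resolution compete.

```python
import numpy as np


def refocus(light_field: np.ndarray, shift_per_view: int) -> np.ndarray:
    """Shift-and-add refocusing of a 4D light field (illustrative sketch).

    light_field has shape (U, V, S, T): (u, v) index the viewpoint and
    (s, t) index pixels within each view. shift_per_view is the integer
    pixel disparity between adjacent views for the plane to bring into focus.
    """
    U, V, S, T = light_field.shape
    uc, vc = (U - 1) // 2, (V - 1) // 2  # central view
    out = np.zeros((S, T), dtype=float)
    for u in range(U):
        for v in range(V):
            # Undo the parallax of the chosen depth plane so points on that
            # plane line up across all views before averaging.
            du = -shift_per_view * (u - uc)
            dv = -shift_per_view * (v - vc)
            out += np.roll(light_field[u, v], shift=(du, dv), axis=(0, 1))
    return out / (U * V)


def point_light_field(U, V, S, T, s0, t0, disparity):
    """Hypothetical test scene: one point whose image shifts by
    `disparity` pixels per view step."""
    lf = np.zeros((U, V, S, T))
    uc, vc = (U - 1) // 2, (V - 1) // 2
    for u in range(U):
        for v in range(V):
            lf[u, v, s0 + disparity * (u - uc), t0 + disparity * (v - vc)] = 1.0
    return lf
```

For example, `refocus(point_light_field(5, 5, 32, 32, 16, 16, 2), 2)` stacks all 25 views of the point onto pixel (16, 16). Refocusing the same data with a shift of 0 spreads it over 25 pixels, which is the post-capture defocus blur.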

The spin-multiplexed metalens array provides two completely decoupled transmission modulations to a pair of orthogonal circular polarization inputs, giving each metalens two focal lengths. The camera can therefore capture light-field information from both close and distant depth ranges simultaneously while maintaining high lateral spatial resolution. We also introduced a multiscale deep convolutional neural network that computationally removes optical aberrations from the captured image, which significantly relaxed the design and performance requirements on the metasurface optics.
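A spin-multiplexed metalens needs each nanostructure to impart one phase on one circular polarization and an independent phase on the other. One widely used way to do this, which we assume here rather than take from the paper's design, is to make each pillar a half-wave plate. Its propagation phases φx and φy set a common phase, and its in-plane rotation θ adds a geometric (Pancharatnam-Berry) phase of opposite sign for the two spins. The sketch below uses a hypothetical wavelength and focal lengths. It computes two hyperbolic focusing profiles and the pillar parameters, then checks with Jones calculus that each spin picks up its own profile.

```python
import numpy as np

# Hypothetical illustrative values, not the parameters of the published device.
WAVELENGTH = 530e-9  # design wavelength (m)
F_NEAR = 0.9e-3      # focal length seen by sigma+ light (m)
F_FAR = 1.2e-3       # focal length seen by sigma- light (m)

# Circular-polarization Jones vectors; which one is "left" is a convention.
SIGMA_PLUS = np.array([1, 1j]) / np.sqrt(2)
SIGMA_MINUS = np.array([1, -1j]) / np.sqrt(2)


def hyperbolic_phase(r, focal_length, wavelength=WAVELENGTH):
    """Ideal focusing phase at radius r for a lens of the given focal length."""
    return -2 * np.pi / wavelength * (np.sqrt(r**2 + focal_length**2) - focal_length)


def pillar_parameters(phi_plus, phi_minus):
    """Half-wave-plate pillar giving phi_plus to sigma+ input and phi_minus
    to sigma- input. Returns (phi_x, phi_y, theta)."""
    phi_x = (phi_plus + phi_minus) / 2
    phi_y = phi_x - np.pi  # pi retardance between axes: half-wave plate
    theta = (phi_plus - phi_minus) / 4
    return phi_x, phi_y, theta


def jones_matrix(phi_x, phi_y, theta):
    """Jones matrix of a birefringent pillar whose axes are rotated by theta."""
    c, s = np.cos(theta), np.sin(theta)
    rot = np.array([[c, -s], [s, c]])
    return rot @ np.diag([np.exp(1j * phi_x), np.exp(1j * phi_y)]) @ rot.T


# Design pillars along one radius and check both spin channels.
for r in np.linspace(0, 40e-6, 5):
    phi_p = hyperbolic_phase(r, F_NEAR)
    phi_m = hyperbolic_phase(r, F_FAR)
    J = jones_matrix(*pillar_parameters(phi_p, phi_m))
    # sigma+ exits as sigma- carrying the near-focus phase, and vice versa.
    assert np.allclose(J @ SIGMA_PLUS, np.exp(1j * phi_p) * SIGMA_MINUS)
    assert np.allclose(J @ SIGMA_MINUS, np.exp(1j * phi_m) * SIGMA_PLUS)
```

Each spin leaves with flipped handedness, as expected for a half-wave plate. In a practical design, φx and φy would be wrapped to [0, 2π) and matched to pillar dimensions from a simulated library. That lookup step is omitted here.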

To illustrate the camera's light-field imaging over an extreme DoF, we chose a scene spanning an enormous depth range. The nearest object was a piece of glass patterned with the opaque characters “NJU,” 3 cm from the primary lens aperture, and the farthest was a high-rise building 1.7 km away. As expected, the camera produced full-color, in-focus images of both near and far objects. The zoomed-in sub-images show that the aberration-correction neural network removes the blur caused by optical aberrations of the metalens array. Applying the reconstruction algorithm then yields a sharp, all-in-focus image of the whole scene across this record DoF.
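The rendering step is only named above. As a generic stand-in, not necessarily the algorithm of ref. 3, a common way to merge images of the same scene focused at different depths into one all-in-focus image is per-pixel focus stacking. This approach measures local sharpness in each image and keeps the sharpest one. The sketch below uses local Laplacian energy as the sharpness measure. The function names, window size and wrap-around borders are illustrative assumptions.

```python
import numpy as np


def laplacian_energy(img, radius=2):
    """Local sharpness: squared discrete Laplacian, box-averaged over a
    (2*radius+1)^2 window. Borders wrap around (np.roll) for simplicity."""
    lap = (np.roll(img, 1, axis=0) + np.roll(img, -1, axis=0)
           + np.roll(img, 1, axis=1) + np.roll(img, -1, axis=1) - 4 * img)
    energy = lap**2
    acc = np.zeros_like(energy)
    for dy in range(-radius, radius + 1):
        for dx in range(-radius, radius + 1):
            acc += np.roll(energy, (dy, dx), axis=(0, 1))
    return acc / (2 * radius + 1) ** 2


def all_in_focus(stack):
    """stack: N images of shape (H, W), each focused at a different depth.
    Returns the fused image and the per-pixel index of the chosen slice."""
    stack = np.asarray(stack, dtype=float)
    sharpness = np.stack([laplacian_energy(s) for s in stack])
    index = np.argmax(sharpness, axis=0)  # sharpest slice per pixel
    fused = np.take_along_axis(stack, index[None], axis=0)[0]
    return fused, index
```

On low-texture regions, per-pixel selection can give a noisy index map. Practical pipelines usually smooth that map or blend slices with soft weights instead of a hard argmax.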

We envision that such integration of nanophotonics with computational photography will stimulate the development of novel optical systems for imaging science that go well beyond traditional light-field imaging technology.

Researchers

Qingbin Fan, Yanqing Lu and Ting Xu, Nanjing University, Nanjing, China

Wenqi Zhu and Amit Agrawal, National Institute of Standards and Technology, Gaithersburg, MD, USA

References

1. I. Ihrke et al. IEEE Signal Process. Mag. 33, 59 (2016).

2. J. Gal et al. Vision Res. 40, 843 (2000).

3. Q. Fan et al. Nat. Commun. 13, 2130 (2022).