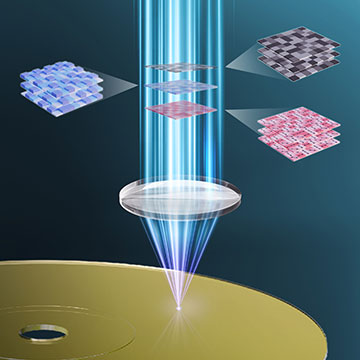

Researchers have developed a new type of holographic data storage that they describe as 3D, encoding and decoding data using the amplitude, phase and polarization of laser beams. [Image: Xiaodi Tan, Fujian Normal University, China]

Scientists in China have reportedly shown how to increase the capacity of holographic data storage by encoding light’s polarization as well as its amplitude and phase (Optica, doi: 10.1364/OPTICA.586593). Their scheme relies on a neural network to separate the three properties from two intensity patterns: one recorded after the light passes through a polarizer and the other recorded directly.

Storing data holographically

Holographic data storage can potentially store data at higher densities than conventional magnetic and optical techniques thanks to multiple planar holograms being recorded in a three-dimensional medium. As with other types of holography, information is written to the medium by interfering two laser beams—one carrying the data and the other serving as a reference. Data are then read out by directing the same reference beam to the correct portion of the medium, recreating the wavefront of the original information-carrying beam.
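As a rough illustration of the write and read steps just described, the sketch below treats a single pixel of a thin hologram whose response is linear in the recorded intensity. Both assumptions are simplifications made here, not details from the paper, and the beam values are hypothetical. Illuminating the recorded pattern with the reference beam produces three terms, one of which is a scaled copy of the data beam.

```python
import cmath

def record(signal, reference):
    """Intensity recorded where the two beams overlap: |R + S|^2."""
    return abs(reference + signal) ** 2

def readout_terms(signal, reference):
    """Split R * |R + S|^2 into its three contributions."""
    # Undiffracted reference light, scaled by the total recorded power.
    zero_order = reference * (abs(reference) ** 2 + abs(signal) ** 2)
    # Copy of the data beam, scaled by the reference power.
    image = abs(reference) ** 2 * signal
    # Conjugate ("twin") term.
    conjugate = reference ** 2 * signal.conjugate()
    return zero_order, image, conjugate

# Hypothetical single-pixel values, chosen only for illustration.
signal = 0.6 * cmath.exp(1j * 0.8)      # data beam: amplitude 0.6, phase 0.8 rad
reference = 1.0 * cmath.exp(1j * 0.0)   # unit-amplitude reference beam

hologram = record(signal, reference)
zero_order, image, conjugate = readout_terms(signal, reference)
total = reference * hologram            # field leaving the hologram on readout
```

With a unit-amplitude reference, `image` equals `signal`, which is the sense in which readout recreates the original wavefront. In a thick, three-dimensional medium the other two terms are largely suppressed, an effect this thin-hologram sketch does not model.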

Storage capacity can be further increased by encoding data in a beam’s phase as well as its amplitude. But in the latest work, Xiaodi Tan and colleagues at Fujian Normal University go one better by adding a third dimension to the encoding process: a light wave’s polarization. They show they can do this without needing to add an interferometer or polarization camera to a conventional CMOS sensor to extract the phase and polarization information. They instead use deep learning to extract the three components from holograms they create in a two-step process.

Two-step holograms

The first writing step involves interfering two halves of a laser beam on a recording medium. The data beam, which is right-hand circularly polarized, is encoded using a phase-only spatial light modulator, while the reference beam is left-hand circularly polarized. The second step repeats this process with both beams left-hand circularly polarized.
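A short Jones-vector sketch may help show why the two steps differ. It relies on textbook polarization optics rather than anything stated in the paper, and the choice of which vector is labelled "right" is a convention assumed here. Two beams of opposite circular handedness add up to linearly polarized light of constant intensity whose orientation follows their phase difference, whereas two beams of the same handedness produce an ordinary intensity fringe.

```python
import cmath
import math

S = 1 / math.sqrt(2)
CIRC_A = (S, -1j * S)   # one circular handedness (called "right" here by assumption)
CIRC_B = (S, 1j * S)    # the opposite handedness

def superpose(u, v, delta):
    """Add Jones vector u to v, with v delayed by a phase delta."""
    phase = cmath.exp(1j * delta)
    return (u[0] + phase * v[0], u[1] + phase * v[1])

def intensity(field):
    """Total intensity of a Jones vector."""
    return abs(field[0]) ** 2 + abs(field[1]) ** 2

def orientation(field):
    """Orientation angle of the polarization ellipse, from Stokes S1 and S2."""
    s1 = abs(field[0]) ** 2 - abs(field[1]) ** 2
    s2 = 2 * (field[0] * field[1].conjugate()).real
    return 0.5 * math.atan2(s2, s1)

delta = 1.0  # hypothetical phase difference between the beams, in radians

# Step 1 analogue: opposite handedness gives a polarization pattern.
step1 = superpose(CIRC_A, CIRC_B, delta)
# Step 2 analogue: same handedness gives an intensity pattern.
step2 = superpose(CIRC_B, CIRC_B, delta)
```

Here `intensity(step1)` stays at 2 for any `delta` while `orientation(step1)` equals `-delta / 2`, and `intensity(step2)` varies as `2 + 2 * cos(delta)`. The first case can only be captured by a medium that responds to polarization.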

The researchers showed it was possible to retrieve the values of amplitude, phase and polarization recorded in the superimposed holograms by using a convolutional neural network to map these values to specific patterns of light intensity. Their scheme involves splitting the diffracted light produced in the data-reconstruction process so that one half travels straight to a CMOS detector while the other reaches a second detector after passing through a polarizer.
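To see why the second detector adds information, consider a deliberately simplified per-pixel model. It assumes linearly polarized light and an ideal polarizer obeying Malus's law, and it ignores the factor of one half from the beam split. None of these details comes from the paper, and the function and parameter names are hypothetical.

```python
import math

def detector_pair(amplitude, polarization_angle, polarizer_angle=0.0):
    """Return (direct, filtered) intensities for one pixel.

    direct   -- reading at the detector with no polarizer: amplitude squared
    filtered -- reading behind an ideal linear polarizer (Malus's law)
    """
    direct = amplitude ** 2
    filtered = direct * math.cos(polarization_angle - polarizer_angle) ** 2
    return direct, filtered

# Two hypothetical pixels with equal amplitude but different polarization.
pixel_a = detector_pair(1.0, 0.0)
pixel_b = detector_pair(1.0, math.pi / 3)
```

The two pixels give the same direct reading but different filtered readings, so only the pair of measurements distinguishes them. In this toy model phase does not appear in either reading, and polarization angles of opposite sign are indistinguishable, which hints at why a learned decoder working on whole patterns is used rather than a per-pixel formula.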

These two intensity patterns, one representing amplitude only and the other depending also on polarization, serve as the inputs to the neural network, which the researchers call TriDecode-Net. The network has three outputs corresponding to the three components of light. To train it, the researchers feed it a series of intensity patterns and record its outputs. The difference between these outputs and the “true” trio of values is used to adjust the connections between the network’s neurons, so that with further iterations it becomes progressively better at matching output values to input patterns.
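The training idea described above can be sketched with a far simpler stand-in. The example below fits a two-input linear model by gradient descent on squared error; it is not TriDecode-Net, whose architecture, loss function and optimizer are not given here, and the toy data are invented for illustration. It shows only the core loop: compare the output with the true value, then nudge the weights to shrink the difference.

```python
import random

def train(samples, epochs=200, lr=0.1):
    """Fit y ~ w0*x0 + w1*x1 + b by per-sample gradient descent."""
    w = [0.0, 0.0]
    b = 0.0
    history = []  # mean squared error after each pass over the data
    for _ in range(epochs):
        total = 0.0
        for (x0, x1), target in samples:
            prediction = w[0] * x0 + w[1] * x1 + b
            error = prediction - target      # output minus "true" value
            total += error ** 2
            # Move each weight against the gradient of the squared error.
            w[0] -= lr * error * x0
            w[1] -= lr * error * x1
            b -= lr * error
        history.append(total / len(samples))
    return w, b, history

rng = random.Random(0)
# Hypothetical toy data: the target is a fixed mix of two "intensities".
samples = []
for _ in range(100):
    x0, x1 = rng.random(), rng.random()
    samples.append(((x0, x1), 0.7 * x0 - 0.3 * x1 + 0.1))

w, b, history = train(samples)
```

The values in `history` fall as training proceeds and the fitted weights approach the ones used to generate the data. A convolutional network applies the same principle to many more weights and to whole images rather than pairs of numbers.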

More storage

The researchers carried out experiments using a recording medium made from a polarization-sensitive photopolymer, generating 120 sets of intensity patterns along with their corresponding values of amplitude, phase and polarization. They used 100 of these sets to train TriDecode-Net and the remaining 20 to test it. The network performed well, with errors in fewer than 3% of pixel values for each of the three parameters, each of which was assigned one of three values on a pixel-by-pixel basis.

“These results demonstrate that TriDecode-Net can precisely reconstruct multidimensional optical information with high accuracy and robustness, even when trained on a limited dataset,” write the researchers.
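Some back-of-the-envelope arithmetic puts the reported figures in context. The sketch below computes an idealized capacity per pixel, assuming the levels are independent and read without error, which is an upper bound rather than a figure from the paper. It also shows one plausible way a per-pixel error rate could be computed; both function names are hypothetical.

```python
import math

def bits_per_pixel(levels, dimensions):
    """Ideal bits per pixel when each dimension takes `levels` values."""
    return dimensions * math.log2(levels)

def pixel_error_rate(predicted, truth):
    """Fraction of pixels whose decoded level differs from the true level."""
    mismatches = sum(p != t for p, t in zip(predicted, truth))
    return mismatches / len(truth)

single = bits_per_pixel(3, 1)   # one parameter with three levels
triple = bits_per_pixel(3, 3)   # amplitude, phase and polarization together

# Hypothetical decoded and true levels for four pixels.
rate = pixel_error_rate([0, 1, 2, 2], [0, 1, 2, 1])
```

Three levels in each of three parameters give 27 possible states per pixel, or about 4.75 bits, compared with about 1.58 bits for a single three-level parameter. Raising the number of levels per parameter would increase this figure further.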

Tan and colleagues argue that the greater storage capacity of their new scheme could in future be used to reduce the size of data centers and render archival storage more efficient. They also reckon the technology could be applied to optical encryption, high-volume communication and advanced forms of imaging.

Before they can commercialize the research, however, they say they need to raise the number of encoding levels for each pixel by improving laser modulation, recording medium stability and decoding fidelity. They also plan to show how their scheme can be incorporated into established multiplexing techniques such as those based on recording angle or wavelength.