
A team of researchers in the United States and Germany have developed a neural-net computational approach that can quickly infer, from low-resolution measurements of X-ray free-electron laser pulse power (gray shadow), both the real (red) and imaginary (blue) parts of the full field (white), enabling pulse-by-pulse measurement of detailed amplitude and phase at MHz rates. [Image: SLAC National Accelerator Laboratory]

X-ray free-electron lasers (XFELs), producing pulses with durations on the femtosecond (10⁻¹⁵ s) scale, have opened a fascinating new view on everything from protein chemistry to the motion of individual atoms. For simplicity, most such XFEL studies assume average values for the light’s amplitude and phase across all pulses in the experiment. Conceivably, a more precise view of those parameters, specific to each pulse, could enable new kinds of high-resolution XFEL pump–probe experiments. But getting such full-field data on a pulse-by-pulse basis is already a tall order, and will become even harder as the repetition rates of these powerful light sources increase toward the megahertz (MHz) level.

Now, researchers at two marquee XFEL facilities, the SLAC National Accelerator Laboratory in the United States and the Deutsches Elektronen-Synchrotron (DESY) in Germany, have devised a method for recovering the amplitude and phase of individual XFEL pulses in real time, using a combination of machine learning and physics-based inference (Opt. Express, doi: 10.1364/OE.432488). And the approach is fast enough, according to the team, to “run at the full beam rate of future MHz XFELs.”

Iterative process

As direct full-field measurement of X-ray pulses generally isn’t feasible, the researchers behind the new study focused on an indirect approach: inferring the full complex field of the X-ray pulse from (inevitably corrupted) measurements of pulse power in the time and spectral domains. This boils down to an inverse problem. Solving it involves making a “best guess” of the complex field (pulse amplitude and phase) that would correspond to the power measurements, plugging the guess into a mathematical forward model that relates the complex field to temporal and spectral power, and iteratively tweaking the guess until the forward model’s predictions agree with the measured power.
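To make the iterative loop concrete, here is a minimal sketch of one classic way to solve this kind of inverse problem: a Gerchberg–Saxton-style alternating projection between the time and spectral domains. This is not presented as the team’s “existing iterative method”; the forward model (temporal power plus spectral power via a unitary FFT), the function names `forward_model` and `retrieve_field`, and the random-phase initial guess are all illustrative assumptions.

```python
import numpy as np


def forward_model(field):
    """Hypothetical forward model: temporal and spectral power of a complex field."""
    spectrum = np.fft.fft(field, norm="ortho")  # unitary FFT, so Parseval holds exactly
    return np.abs(field) ** 2, np.abs(spectrum) ** 2


def retrieve_field(p_time, p_freq, n_iter=200, seed=0):
    """Gerchberg-Saxton-style retrieval of a complex field from two power measurements.

    Returns the final field estimate and the spectral-mismatch error at each iteration.
    """
    rng = np.random.default_rng(seed)
    amp_t = np.sqrt(p_time)  # measured temporal amplitude
    amp_f = np.sqrt(p_freq)  # measured spectral amplitude

    # "Best guess": measured temporal amplitude with a random phase.
    field = amp_t * np.exp(1j * rng.uniform(0.0, 2.0 * np.pi, p_time.size))

    errors = []
    for _ in range(n_iter):
        spectrum = np.fft.fft(field, norm="ortho")
        # How far the current guess is from the measured spectral amplitude.
        errors.append(np.sum((np.abs(spectrum) - amp_f) ** 2))
        # Impose the measured spectral amplitude, keep the current spectral phase.
        spectrum = amp_f * np.exp(1j * np.angle(spectrum))
        field = np.fft.ifft(spectrum, norm="ortho")
        # Impose the measured temporal amplitude, keep the current temporal phase.
        field = amp_t * np.exp(1j * np.angle(field))
    return field, errors
```

Each call has to be run from scratch for every new pulse, and many iterations may be needed before the error stops improving, which is exactly the cost the next section describes.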

The problem, though, is that even with a fast computer, the iterative process takes seconds to converge to a solution, and needs to be repeated from the ground up for each new pulse. That’s impractical even at current repetition rates—and will become even more so as XFELs upgrade to producing a million pulses a second, and tens of billions of pulses in a single experiment.
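A back-of-the-envelope estimate shows why “seconds per pulse” cannot scale. The sketch below assumes, for illustration only, 1 second per iterative solve and 10¹⁰ pulses (the low end of “tens of billions”), processed one after another on a single solver; the function name is hypothetical.

```python
SECONDS_PER_YEAR = 365.25 * 24 * 3600  # Julian year


def serial_processing_years(n_pulses: float, seconds_per_solve: float) -> float:
    """Wall-clock years to solve every pulse in turn on one solver (illustrative)."""
    return n_pulses * seconds_per_solve / SECONDS_PER_YEAR


# Assumptions: 1e10 pulses, 1 s per iterative solve.
years = serial_processing_years(1e10, 1.0)
print(f"{years:.0f} years")  # prints "317 years"
```

Parallel hardware would shorten this, but only by a constant factor, and the per-pulse solver would still fall far behind a beam delivering a million pulses a second.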

Machine-learning approach

To sidestep that hopeless lack of scalability, the team turned to machine learning, putting the inverse-mapping problem in the hands of a computational neural network. In such an approach, the computer would be trained in advance to infer the amplitude and phase from the power measurements, essentially packing the iterative part of the process into the advance training of the system. The training itself would be computationally laborious and time-consuming. But once the computer was trained, inferring the amplitude and phase from the power measurements should require only a single pass through the network, allowing a near-real-time readout of the full field.
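What “a single pass through the network” means computationally can be sketched with a small fully connected network in NumPy. The architecture here (concatenated temporal and spectral power in, amplitude and phase out, two ReLU hidden layers) and the names `init_weights` and `mlp_forward` are hypothetical and not the team’s network; the weights are random and untrained, so the outputs are meaningless. The point is only that inference costs a fixed handful of matrix-vector products, with no iteration.

```python
import numpy as np


def init_weights(n, hidden=64, seed=0):
    """Random (untrained) weights for a hypothetical 2n -> hidden -> hidden -> 2n network."""
    rng = np.random.default_rng(seed)
    sizes = [2 * n, hidden, hidden, 2 * n]
    return [
        (rng.normal(0.0, np.sqrt(2.0 / fan_in), (fan_out, fan_in)), np.zeros(fan_out))
        for fan_in, fan_out in zip(sizes[:-1], sizes[1:])
    ]


def mlp_forward(p_time, p_freq, weights):
    """One forward pass: measured power in, amplitude and phase out."""
    x = np.concatenate([p_time, p_freq])
    for w, b in weights[:-1]:
        x = np.maximum(0.0, w @ x + b)  # ReLU hidden layers
    w_out, b_out = weights[-1]
    y = w_out @ x + b_out  # linear output layer of length 2n
    n = p_time.size
    amplitude = np.logaddexp(0.0, y[:n])  # softplus keeps amplitude non-negative
    phase = np.pi * np.tanh(y[n:])  # phase bounded to [-pi, pi]
    return amplitude, phase
```

All the expensive work would go into choosing the weights during training; once they are fixed, each new pulse costs the same small, predictable amount of arithmetic.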

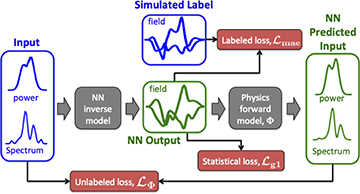

The neural-network training flow devised by the team supports training by comparing results against real or simulated labeled data (supervised learning), or by using unlabeled experimental data and minimizing losses against a forward physical model (unsupervised learning). [Image: D. Ratner et al., Opt. Express, doi: 10.1364/OE.432488 (2022)]

The specific flavor of neural network used by the team is a so-called generative adversarial network (GAN). This kind of computational approach basically pits two neural networks against each other: a “generator,” which produces candidate solutions from random inputs, and a “discriminator,” which attempts to decide whether a given example came from the generator or from a training set of real data. For the XFEL problem, the team used as the discriminator the same physical forward model, relating the complex field to temporal and spectral power, that would be used in the more conventional, time-consuming iterative inverse-modeling approach.

This meant that the system could be trained either in the familiar “supervised” manner, in which the computer learns by comparing its results with a training set of “labeled” data, or in an “unsupervised” manner, in which its outputs are checked against the measured power via the forward model, with no labeled data needed. The latter approach, in turn, could allow the system to be trained directly on unlabeled experimental data, a potentially powerful advantage. Either way, once trained, the system should be able to quickly infer the complete field (phase and amplitude) from arbitrary input pulse-power data.
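The difference between the two training modes comes down to what the loss is compared against. In the minimal sketch below (the function names, the mean-squared-error form and the FFT-based forward model are illustrative assumptions, not the paper’s exact losses), the supervised loss needs a labeled “true” field, while the unsupervised loss needs only the measured power, which it reaches by pushing the prediction through the forward model.

```python
import numpy as np


def forward_model(field):
    """Hypothetical forward model: temporal and spectral power of a complex field."""
    spectrum = np.fft.fft(field, norm="ortho")
    return np.abs(field) ** 2, np.abs(spectrum) ** 2


def supervised_loss(pred_field, label_field):
    """Requires a labeled field for every training example."""
    return np.mean(np.abs(pred_field - label_field) ** 2)


def unsupervised_loss(pred_field, p_time, p_freq):
    """Requires only measured power: compare the prediction's forward-modeled power."""
    pred_t, pred_f = forward_model(pred_field)
    return np.mean((pred_t - p_time) ** 2) + np.mean((pred_f - p_freq) ** 2)
```

One property worth noticing: multiplying a field by a constant phase factor leaves both power measurements unchanged, so the unsupervised loss cannot tell such fields apart even though the supervised loss can. Any power-only reconstruction inherits this ambiguity.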

Blazing speed

In tests of the system, the researchers found that, after training, the network could recover the amplitude of individual X-ray pulses with three times the accuracy of the existing iterative method, and could also pull out the phase of individual pulses. Even more important, it could do these inferences blazingly fast, at millions of times the rate of the iterative approach. That, the team concludes, opens up the potential to harvest full-field data in real time, pulse by pulse, from the MHz-repetition-rate XFELs that will soon become available.
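Why a speedup of “millions of times” is the relevant threshold follows from the per-pulse time budget. The sketch below assumes, for illustration only, that the iterative method takes about 1 second per pulse and that the target is a 1 MHz beam; the function names are hypothetical.

```python
import math


def time_budget_per_pulse(rep_rate_hz: float) -> float:
    """Seconds available per pulse if each pulse is processed as it arrives."""
    return 1.0 / rep_rate_hz


def required_speedup(seconds_per_solve: float, rep_rate_hz: float) -> float:
    """Factor by which a per-pulse solver must be accelerated to keep pace."""
    return seconds_per_solve / time_budget_per_pulse(rep_rate_hz)


# Assumptions: ~1 s per iterative solve, 1 MHz repetition rate.
budget = time_budget_per_pulse(1e6)  # about 1 microsecond per pulse
speedup = required_speedup(1.0, 1e6)  # about a million-fold
```

Under these assumptions, a solver that is roughly a million times faster just fits inside the microsecond available per pulse at 1 MHz.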

Such fast, high-resolution measurements have the potential to unlock new science from XFELs in areas such as protein structure, quantum mechanics and materials. However, the team acknowledges that some hurdles remain. A big one is that, while the neural networks can do their analysis at MHz rates, the diagnostics that generate the network’s input power data currently run at only 120 Hz. “Taking full advantage of the [neural network] speed,” the researchers write, “will require development of new diagnostics” that produce the input data at a much faster rate.